With the release of VMware Cloud Foundation 4.4, we now support 2-node clusters.

The requirements are as follows:

• vSphere Lifecycle Management Images must be used. vLCM Baseline mode is not supported.

• Principal storage must be one of the following: VMFS on FC, vVol or NFS.

vSAN is not supported for 2-node clusters.
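
The requirements above can be written down as a small pre-flight check. This is only a minimal Python sketch and not something SDDC Manager provides: the function name and the string labels (`"IMAGE"`, `"VMFS_FC"`, and so on) are hypothetical and chosen for illustration.

```python
# Hypothetical pre-flight check for the 2-node requirements listed above.
# The labels below are illustrative only; they are not VCF API values.
SUPPORTED_STORAGE = {"VMFS_FC", "VVOL", "NFS"}


def two_node_problems(lcm_mode, storage):
    """Return the reasons a 2-node cluster would not be supported."""
    problems = []
    if lcm_mode != "IMAGE":
        problems.append("vLCM Images are required; Baseline mode is not supported")
    if storage not in SUPPORTED_STORAGE:
        problems.append(f"principal storage {storage!r} is not supported")
    return problems


print(two_node_problems("IMAGE", "NFS"))
print(two_node_problems("BASELINE", "VSAN"))
```

An empty list means the combination meets the requirements; anything else lists what needs to change first.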

You can deploy a 2-node cluster in a new workload domain or add it to an existing workload domain. The vSphere Lifecycle Management mode is determined at the workload domain level, which means that an existing workload domain using vLCM Baseline (“VUM”) mode cannot host 2-node clusters.

Below is a graphical how-to. If you want the code for an API-based deployment, you can skip to the end of this post.

How-to

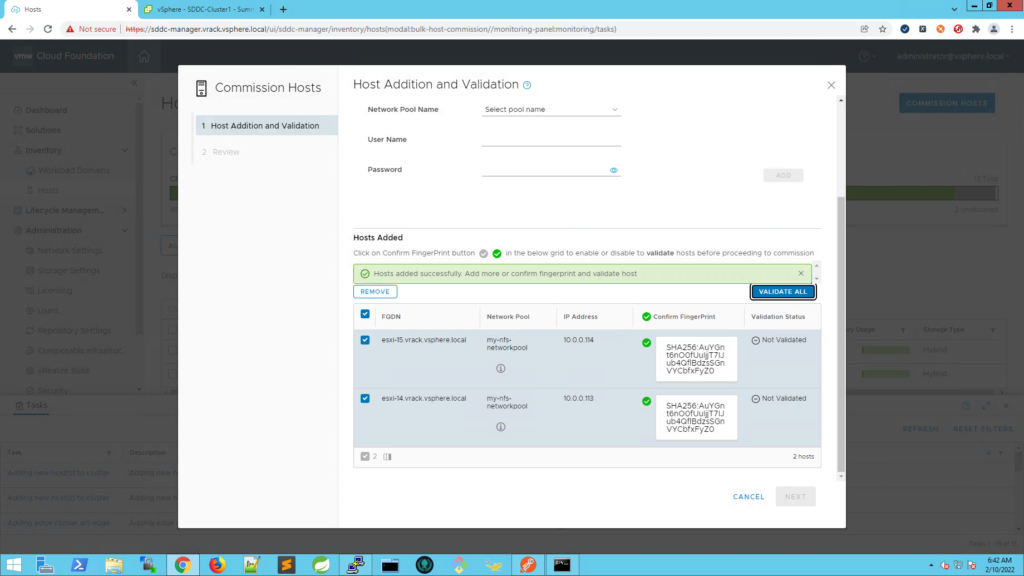

Start by commissioning additional hosts. If you already have unassigned hosts, you may skip this step.

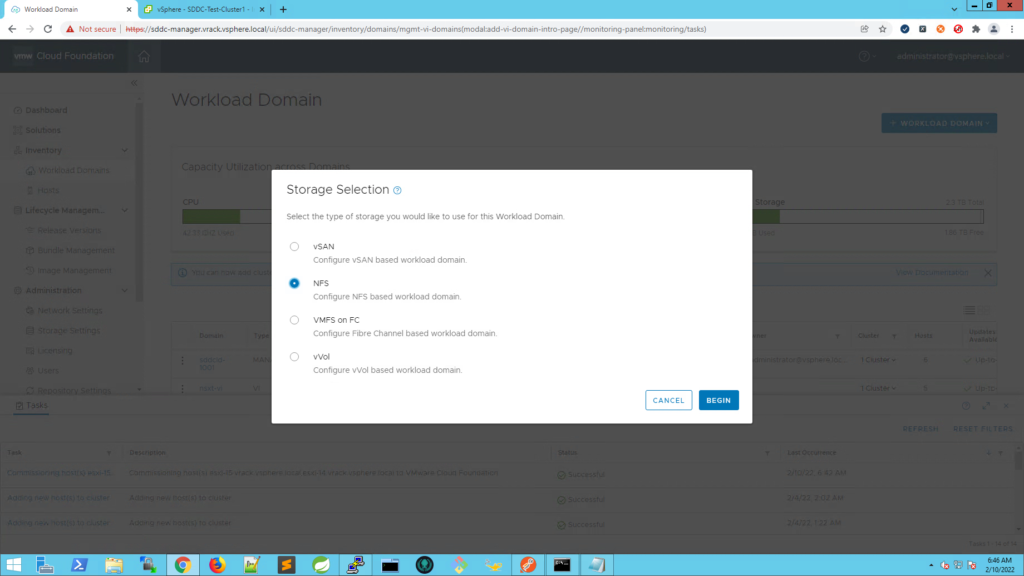

Once the hosts are in an unassigned state, you can start the VI Workload Domain deployment workflow. I’m going to use NFS in my lab.

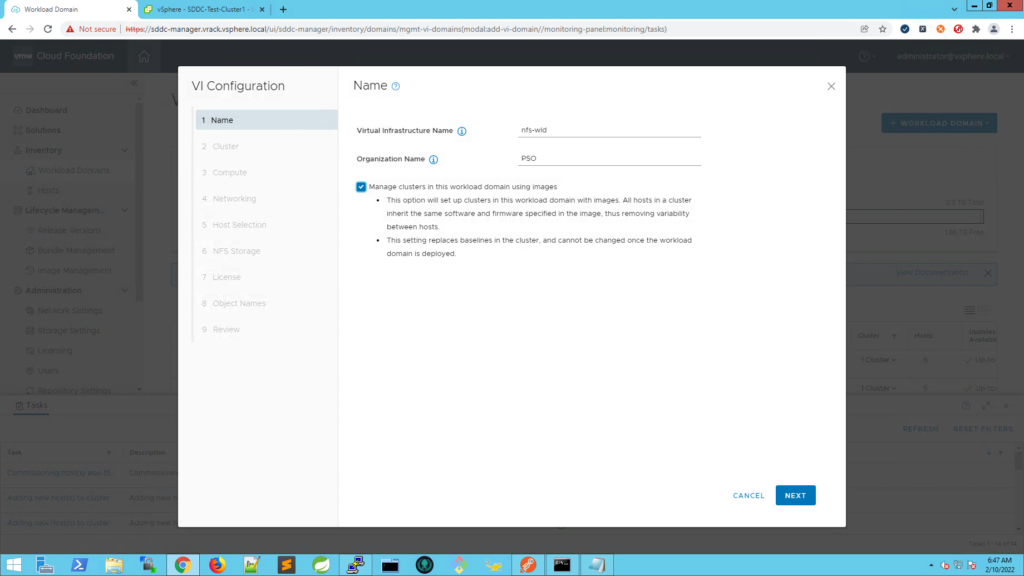

Enter the Virtual Infrastructure Name and Organization Name and tick the box to use vLCM Images for this workload domain.

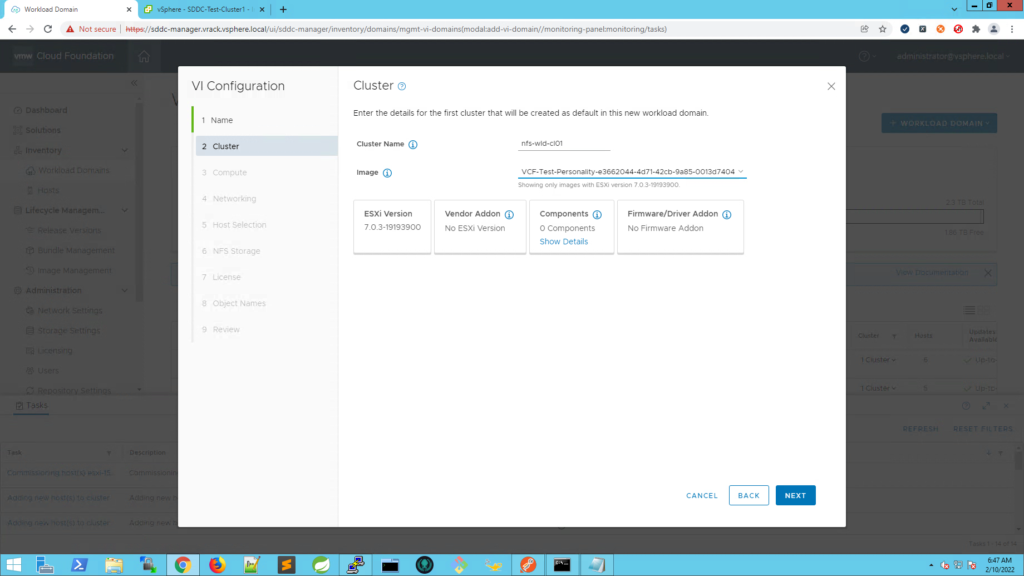

Give the cluster a name and select which vLCM Image to use for this cluster.

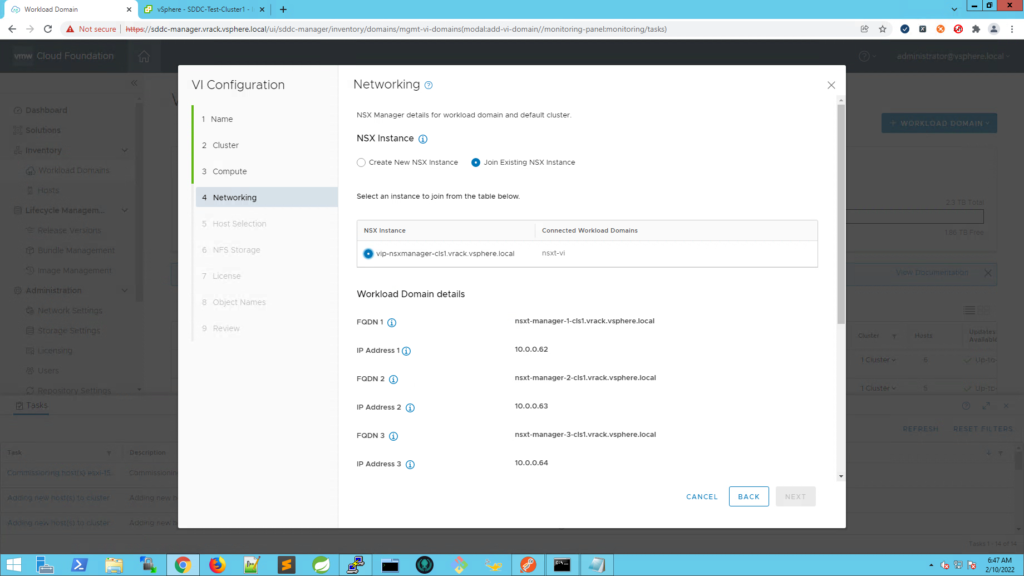

Specify the NSX-T networking details. For the purpose of this exercise I will re-use an existing NSX Instance. Otherwise, enter the DNS records for the three NSX-T Managers, the VIP and the VLAN ID for the overlay network.

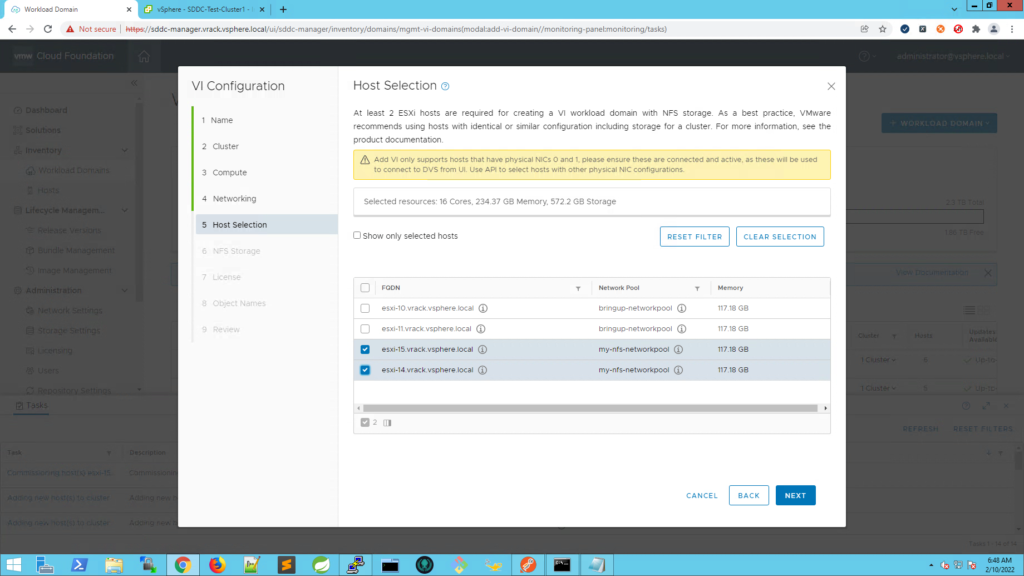

Select the hosts to use for this new 2-node cluster.

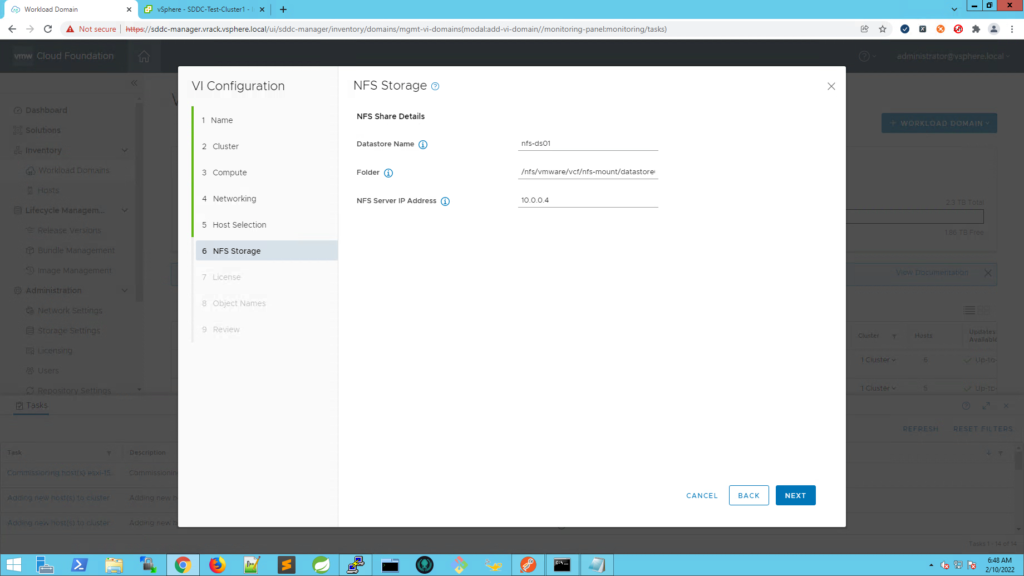

Specify the datastore information.

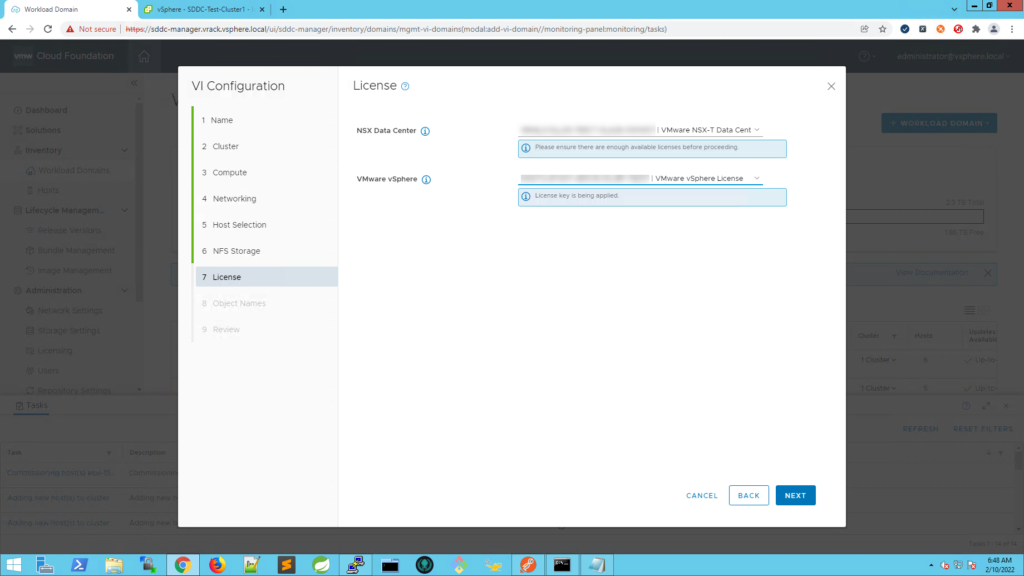

Select the licenses to apply.

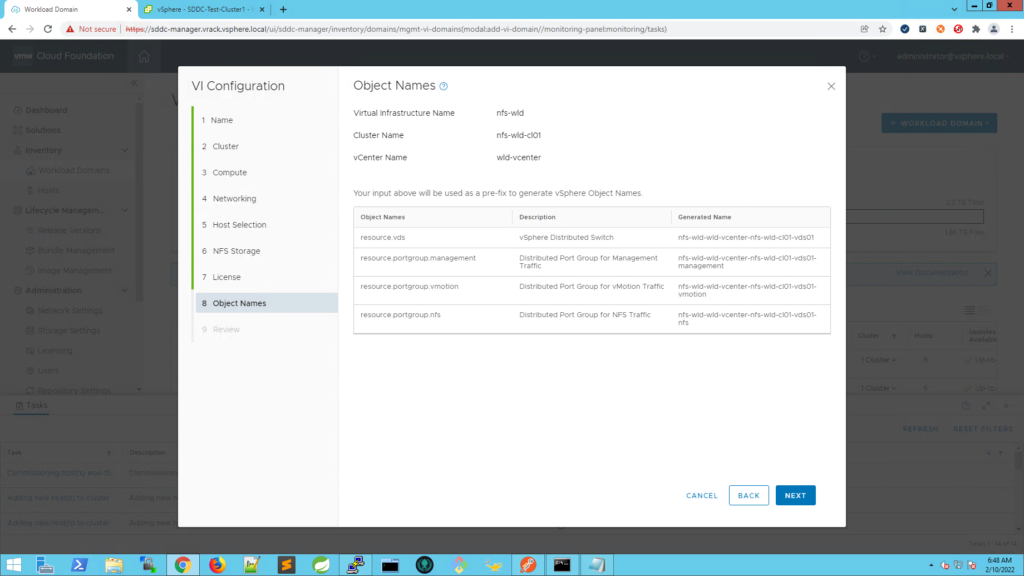

Review the object names. When deploying using the SDDC Manager UI, you cannot control the object names. An API-based deployment must be used in order to control these.
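
With an API-based deployment the object names are plain strings in the JSON spec, so they can be generated from a convention instead of typed by hand. The following is a minimal sketch that assumes the naming pattern used in the example specs at the end of this post; the function name and dictionary keys are hypothetical.

```python
def object_names(domain, cluster_index=1):
    """Build object names following the pattern in this post's example specs."""
    cluster = f"{domain}-cl{cluster_index:02d}"
    vds = f"{cluster}-vds01"
    return {
        "cluster": cluster,
        "vds": vds,
        "mgmt_portgroup": f"{vds}-pg-mgmt",
        "vmotion_portgroup": f"{vds}-pg-vmotion",
        "nfs_datastore": f"{cluster}-ds-nfs01",
    }


names = object_names("sfo-w01", 2)
print(names["cluster"])  # sfo-w01-cl02
```

The returned values can then be dropped into the `name`, `vdsName`, and `datastoreName` fields of the spec.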

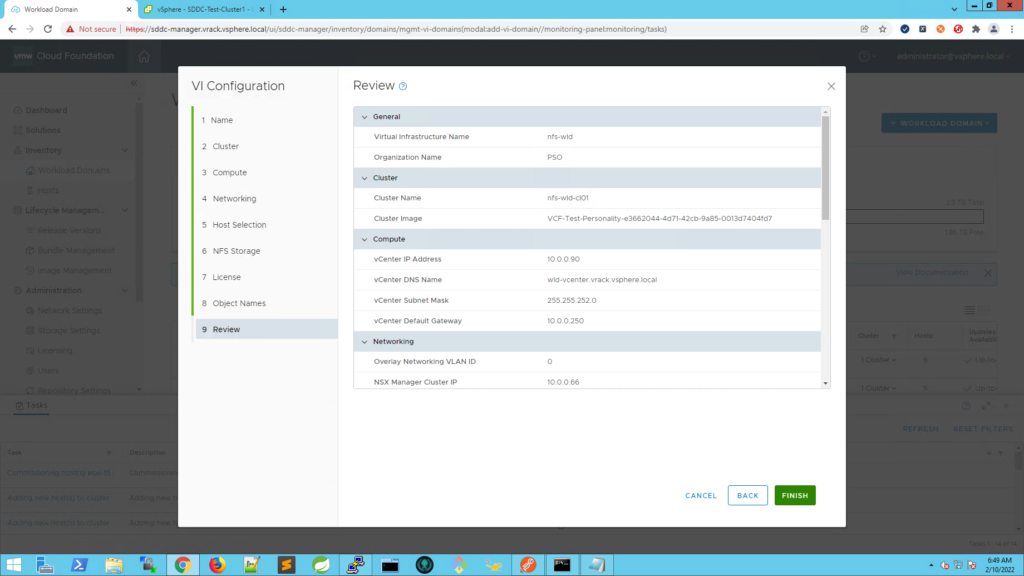

Review the summary and click Finish.

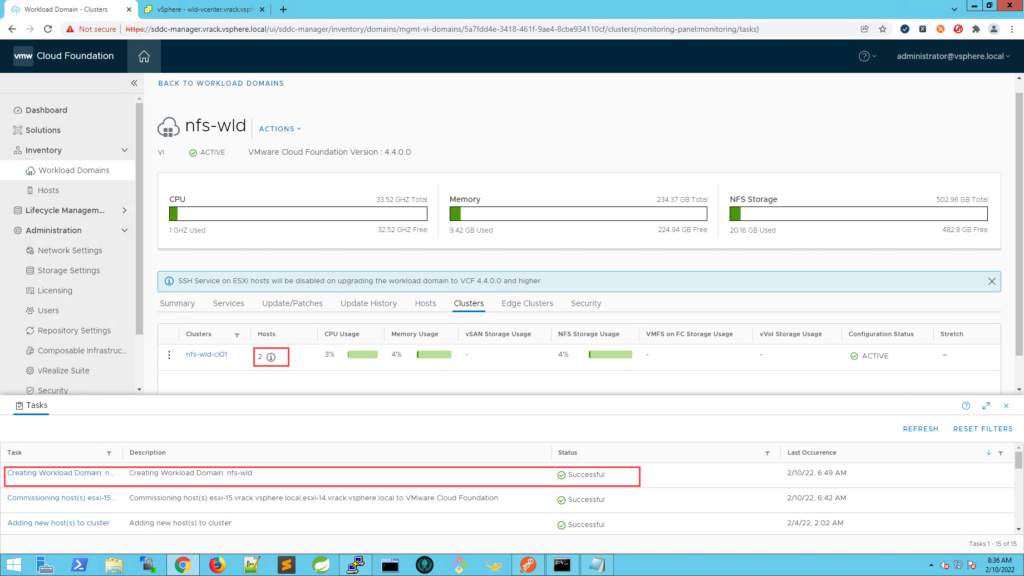

Once the task succeeds, we can see that we have a new workload domain deployed, with a single cluster consisting of 2 nodes.

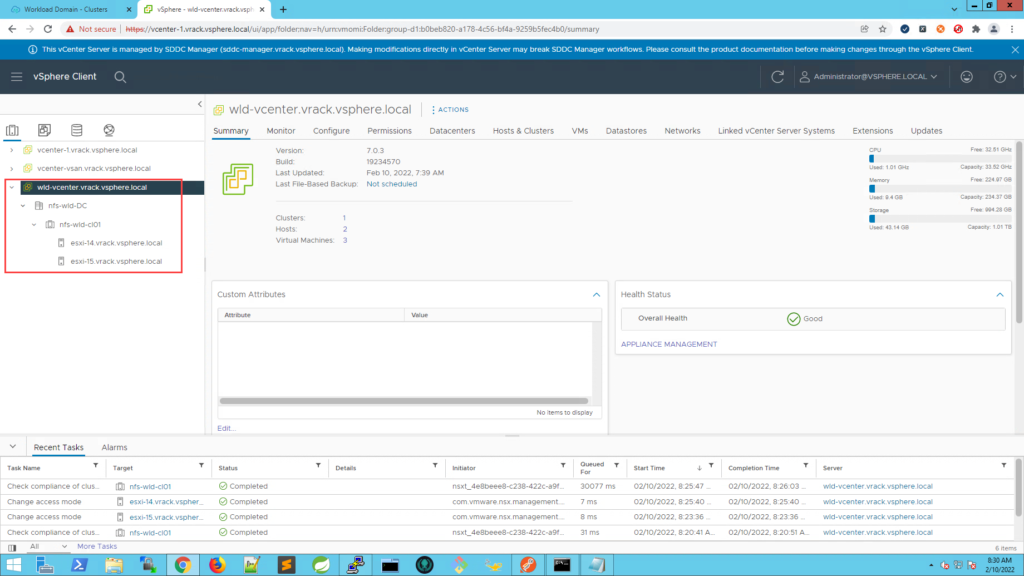

View from vSphere Client

API Based Deployment

If you’d rather do an API-based deployment, you can use this JSON to deploy a new Workload Domain with a 2-node cluster:

    {
      "domainName": "sfo-w01",
      "orgName": "rainpole",
      "vcenterSpec": {
        "name": "sfo-w01-vc01",
        "networkDetailsSpec": {
          "ipAddress": "172.16.11.64",
          "dnsName": "sfo-w01-vc01.sfo.rainpole.io",
          "gateway": "172.16.11.1",
          "subnetMask": "255.255.255.0"
        },
        "rootPassword": "vcenter_server_root_password",
        "datacenterName": "sfo-w01-dc01"
      },
      "computeSpec": {
        "clusterSpecs": [{
          "clusterImageId": "guid_of_vlcm_image",
          "name": "sfo-w01-cl01",
          "hostSpecs": [{
            "id": "guid_of_esxi_host",
            "licenseKey": "vsphere_esxi_license_key",
            "hostNetworkSpec": {
              "vmNics": [{
                "id": "vmnic0",
                "vdsName": "sfo-w01-cl01-vds01"
              }, {
                "id": "vmnic1",
                "vdsName": "sfo-w01-cl01-vds01"
              }]
            }
          }, {
            "id": "guid_of_esxi_host",
            "licenseKey": "vsphere_esxi_license_key",
            "hostNetworkSpec": {
              "vmNics": [{
                "id": "vmnic0",
                "vdsName": "sfo-w01-cl01-vds01"
              }, {
                "id": "vmnic1",
                "vdsName": "sfo-w01-cl01-vds01"
              }]
            }
          }],
          "datastoreSpec": {
            "nfsDatastoreSpecs": [{
              "nasVolume": {
                "serverName": ["10.0.0.250"],
                "path": "/nfs_mount/my_read_write_folder",
                "readOnly": false
              },
              "datastoreName": "sfo-w01-cl01-ds-nfs01"
            }]
          },
          "networkSpec": {
            "vdsSpecs": [{
              "name": "sfo-w01-cl01-vds01",
              "isUsedByNsxt": true,
              "portGroupSpecs": [{
                "name": "sfo-w01-cl01-vds01-pg-mgmt",
                "transportType": "MANAGEMENT"
              }, {
                "name": "sfo-w01-cl01-vds01-pg-vmotion",
                "transportType": "VMOTION"
              }]
            }],
            "nsxClusterSpec": {
              "nsxTClusterSpec": {
                "geneveVlanId": 1634
              }
            }
          }
        }]
      },
      "nsxTSpec": {
        "nsxManagerSpecs": [{
          "name": "sfo-w01-nsx01a",
          "networkDetailsSpec": {
            "ipAddress": "172.16.11.76",
            "dnsName": "sfo-w01-nsx01a.sfo.rainpole.io",
            "gateway": "172.16.11.1",
            "subnetMask": "255.255.255.0"
          }
        }, {
          "name": "sfo-w01-nsx01b",
          "networkDetailsSpec": {
            "ipAddress": "172.16.11.77",
            "dnsName": "sfo-w01-nsx01b.sfo.rainpole.io",
            "gateway": "172.16.11.1",
            "subnetMask": "255.255.255.0"
          }
        }, {
          "name": "sfo-w01-nsx01c",
          "networkDetailsSpec": {
            "ipAddress": "172.16.11.78",
            "dnsName": "sfo-w01-nsx01c.sfo.rainpole.io",
            "gateway": "172.16.11.1",
            "subnetMask": "255.255.255.0"
          }
        }],
        "vip": "172.16.11.75",
        "vipFqdn": "sfo-w01-nsx01.sfo.rainpole.io",
        "licenseKey": "nsxt_datacenter_enterprise_license_key",
        "nsxManagerAdminPassword": "nsxt_manager_admin_password"
      }
    }
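
To show how a spec like this might be submitted, here is a minimal Python sketch using only the standard library. It assumes the SDDC Manager public API paths `/v1/domains/validations` and `/v1/domains` and a bearer access token obtained beforehand; verify both against the API reference for your VCF version. The host name, token value, and helper names are placeholders I made up for illustration.

```python
import json
import urllib.request


def build_post(base_url, path, spec, token):
    """Build (but do not send) an authenticated JSON POST request."""
    return urllib.request.Request(
        url=base_url.rstrip("/") + path,
        data=json.dumps(spec).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",
        },
        method="POST",
    )


def submit(request):
    """Send a prepared request and return the parsed JSON response."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)


# Placeholder values: use your own SDDC Manager FQDN, token and the full spec.
base_url = "https://sddc-manager.example"
spec = {"domainName": "sfo-w01", "orgName": "rainpole"}

# Assumed API paths: validate the spec first, then create the domain.
validation = build_post(base_url, "/v1/domains/validations", spec, "ACCESS_TOKEN")
creation = build_post(base_url, "/v1/domains", spec, "ACCESS_TOKEN")
print(validation.get_method(), validation.full_url)
```

Calling `submit(validation)` and then `submit(creation)` would send the requests. Nothing is sent by the sketch itself, so it is safe to run while you are still filling in the spec.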

If you only want to deploy a 2-node cluster to an existing Workload Domain:

    {
      "domainId": "guid_of_workload_domain",
      "computeSpec": {
        "clusterSpecs": [{
          "clusterImageId": "guid_of_vlcm_image",
          "name": "sfo-w01-cl02",
          "hostSpecs": [{
            "id": "guid_of_esxi_host",
            "licenseKey": "vsphere_esxi_license_key",
            "username": "root",
            "hostNetworkSpec": {
              "vmNics": [{
                "id": "vmnic0",
                "vdsName": "sfo-w01-cl02-vds01"
              }, {
                "id": "vmnic1",
                "vdsName": "sfo-w01-cl02-vds01"
              }]
            }
          }, {
            "id": "guid_of_esxi_host",
            "licenseKey": "vsphere_esxi_license_key",
            "username": "root",
            "hostNetworkSpec": {
              "vmNics": [{
                "id": "vmnic0",
                "vdsName": "sfo-w01-cl02-vds01"
              }, {
                "id": "vmnic1",
                "vdsName": "sfo-w01-cl02-vds01"
              }]
            }
          }],
          "datastoreSpec": {
            "nfsDatastoreSpecs": [{
              "nasVolume": {
                "serverName": ["10.0.0.250"],
                "path": "/nfs_mount/my_read_write_folder",
                "readOnly": false
              },
              "datastoreName": "sfo-w01-cl02-ds-nfs01"
            }]
          },
          "networkSpec": {
            "vdsSpecs": [{
              "isUsedByNsxt": true,
              "name": "sfo-w01-cl02-vds01",
              "portGroupSpecs": [{
                "name": "sfo-w01-cl02-vds01-pg-mgmt",
                "transportType": "MANAGEMENT"
              }, {
                "name": "sfo-w01-cl02-vds01-pg-vmotion",
                "transportType": "VMOTION"
              }]
            }],
            "nsxClusterSpec": {
              "nsxTClusterSpec": {
                "geneveVlanId": 1634
              }
            }
          },
          "advancedOptions": {
            "evcMode": "",
            "highAvailability": {
              "enabled": true
            }
          }
        }],
        "skipFailedHosts": false
      }
    }
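
Because both specs repeat the same VDS name in several places, a quick local sanity check before submitting can catch copy-paste mistakes. The sketch below is my own illustration, not a VCF tool: it checks one entry of `computeSpec.clusterSpecs` for two hosts, a `clusterImageId`, and `vdsName` values that match a declared entry in `vdsSpecs`. The function name and the trimmed sample data are hypothetical.

```python
def check_cluster_spec(cluster):
    """Return a list of problems found in one entry of computeSpec.clusterSpecs."""
    problems = []
    hosts = cluster.get("hostSpecs", [])
    if len(hosts) != 2:
        problems.append(f"expected 2 hosts, found {len(hosts)}")
    if not cluster.get("clusterImageId"):
        problems.append("clusterImageId is missing (vLCM Images are required)")
    # Every vmnic must point at a VDS that is declared in networkSpec.vdsSpecs.
    vds_specs = cluster.get("networkSpec", {}).get("vdsSpecs", [])
    declared = {vds["name"] for vds in vds_specs}
    for host in hosts:
        for nic in host.get("hostNetworkSpec", {}).get("vmNics", []):
            if nic["vdsName"] not in declared:
                problems.append(f"{nic['id']} references unknown VDS {nic['vdsName']}")
    return problems


def sample_host(vds_name):
    """Trimmed host entry with the same shape as the specs above."""
    nics = [{"id": nic, "vdsName": vds_name} for nic in ("vmnic0", "vmnic1")]
    return {"id": "guid_of_esxi_host", "hostNetworkSpec": {"vmNics": nics}}


cluster = {
    "clusterImageId": "guid_of_vlcm_image",
    "name": "sfo-w01-cl02",
    "hostSpecs": [sample_host("sfo-w01-cl02-vds01"), sample_host("sfo-w01-cl02-vds01")],
    "networkSpec": {"vdsSpecs": [{"name": "sfo-w01-cl02-vds01"}]},
}
print(check_cluster_spec(cluster))
```

An empty list means the spec passed these three checks. It says nothing about whether SDDC Manager will accept the spec, so the API validation step is still worth running.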

Good stuff. Version 4.3.1 supports 2-node clusters as well, but only as an API-based deployment.

Have there been any changes to this in VCF 4.5 or 5.0, i.e. support for 2-node clusters in baseline-based workload domains?

Hi Andre,

There are no changes in VCF 5 with regard to this. vLCM image mode is still required. vLCM Baseline mode is actually deprecated in vSphere 8. You can read about it in the vSphere 8 release notes: https://docs.vmware.com/en/VMware-vSphere/8.0/rn/vmware-vsphere-80-release-notes/index.html

Work is ongoing to only use image mode with VCF.